✺✺✺

The premise

Social media companies spend billions engineering recommendation algorithms that optimize for doomscroll-time.

But...

What if we wanted to optimize for doomscroll-intensity instead?

We can leverage Reinforcement Learning to train an agent to optimize the scrolling pattern with the explicit objective of maximizing predicted cortical activation across brain surface vertices during a tiktok session.

Bye bye manual scrolling.

✺✺✺

How?

1

TikTok Videos

878 videos selected from the TikTok-10M dataset

Clustered with K-means

2

Brain Model

TRIBE v2 FmriEncoder predicts cortical response.

20,484 surface vertices

3

RL Scrolling Agent

PPO learns when to scroll and what video to select next

Reward = avg activation + delta

We simulate how each moment in a TikTok scrolling session would activate the brain with a pretrained FmriEncoder, derive a heuristic for dopamine usage from the activations to use as a reward signal, and then train an RL agent (PPO-Clip) to discover the scrolling behavior and recommendation algorithm that produces the highest overall brainrot.

Architecture

Full RL data flow from raw video, through the FmriEncoder transformer, brain activation, reward computation, and PPO agent actions: scrolling and video selection.

Feature Extraction (Preprocessing)

- •V-JEPA 2 — 8 layer groups × 1,280-dim visual features

- •Wav2Vec-BERT 2.0 — 9 layer groups × 1,024-dim audio features

- •Concatenated per-modality, projected into shared 1,152-dim space

Brain Model (runs during RL)

- •FmriEncoder: 8 transformer blocks with RoPE, ScaleNorm, scaled residuals

- •Low-rank projection head → 2,048 dims before final prediction

- •Subject-averaged readout layer → 20,484 cortical surface vertices (fsaverage5)

Reward Signal

- •DopamineReward: α × mean(|activation|) + β × ‖Δactivation‖

- •CortisolReward variant: region-weighted activation (anterior-ventral 2.2×)

- •Optional switch penalty + minimum dwell time enforcement

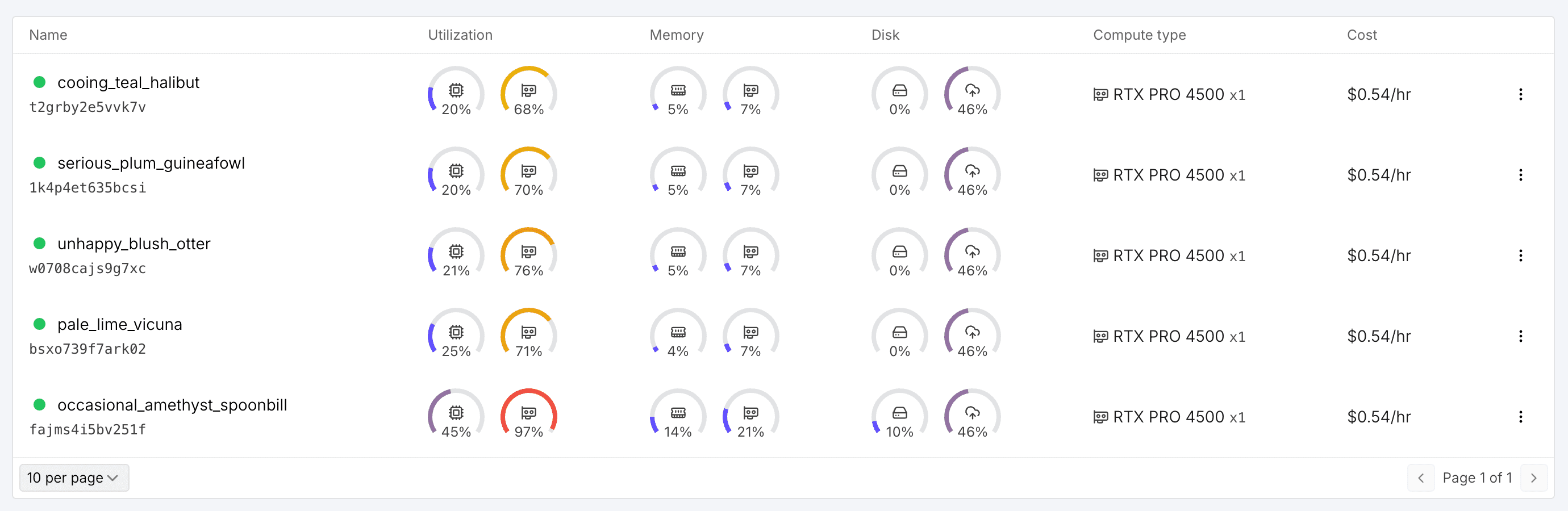

Trained variants

We explore three agent configurations with different RL setups and reward functions.

select_baseline

Baseline select policy — PPO + MLP, no penalties, dopamine reward

select_recurrent_lstm

LSTM policy with brain region observation, has richer context

select_cortisol

Cortisol reward: weights anterior-ventral regions 2.2× for stress-like activation

Training was run on 5x RTX PRO 4500 instances for about 12 hours. About $30.

✺✺✺

Training Results

They actually learn! Kinda. Here's the metrics from training the agents on 878 TikTok videos, each for 500K timesteps (equivalent to ~70 hours of doomscrolling).

They basically learned to reward hack the env by scrolling as fast as possible (2Hz, the brain models frequency), which conveniently seems to fry the brain the most. They learn this behavior even when they have explicit penalties for fast scrolling. Technically speaking, this is what we asked for though.

Charts load as you scroll.

✺✺✺

Demo

Loading real agent rollouts...

✺✺✺

What have we done?

how it feels to scroll manually

how it feels to let ai scroll for you

We have created the optimal doomscroller, applying literal state-of-the-art technology to the problem of maximizing dopamine depletion. This could counter-intuitively save you time and energy. Possibly. Use the model responsibly.